How Market Researchers Can Unlock AI to Boost Data Analysis and Insights

Researchers face heavy data workloads but are slowed by manual analysis. AI could help, but concerns around privacy, bias, and integration make adoption challenging, so the focus here is on where it can be used effectively and safely.

Market research professionals are swimming in open-ends, trackers, and dashboards that need answers yesterday, yet the real drag is the same every time: slow manual analysis and inconsistent outputs. Adopting AI can feel like stepping onto thin ice when data privacy concerns and shifting regulations sit alongside real methodological questions about bias, transparency, and defensible reasoning. Add messy technology integration across tools, vendors, and internal teams, and even small improvements in data processing can look out of reach. The opportunity lies in learning where AI genuinely fits in the research workflow and applying it with confidence and control.

Understanding the AI Foundations That Stick

AI only helps research when the basics match your workflow. Start by treating artificial intelligence (AI) as software that learns patterns from data, then focus on the few machine learning ideas that affect coding, cleaning, tagging, and summarizing. From there, map the support system: data engineering to move and shape data, governance to set rules and accountability, and cybersecurity to keep respondents and clients protected.

This matters because confidence beats hype when you are presenting results to stakeholders. When you pair core AI skills with clear guardrails, teams collaborate faster, share standards, and build credibility through consistent outputs. It also makes professional development easier because you can choose training that fits your role and aligns with recognized certifications or formal information technology studies.

Think of it like building a research kitchen. AI is the new appliance, but you still need clean ingredients, labeled storage, and safe handling. When data-driven methodologies become the norm, your workflow stays repeatable even as tools change.

With the foundation set, practical automation and repeatable workflows become safe to pilot.

Turn AI Into Workflow Wins: 5 Practical Ways to Start

You don’t need to rebuild your tech stack to get real value from AI. The trick is to start where AI can reliably reduce manual work or improve consistency, then expand once your data, governance, and security basics are steady.

Pick one “boring” workflow to automate first: Start with a repeatable, low-risk task like cleaning open-ends (deduping, spell correction), tagging themes, or generating a first-pass topline summary. Define “done” in plain language and set a before/after metric such as minutes saved per project or inter-coder agreement. This is business data automation at its best: small scope, clear quality checks, and easy rollback if outputs drift.

Create a reusable “ML-ready dataset” pattern: Choose one core analysis dataset you build often (e.g., tracker waves, U&A, brand health) and standardize it: consistent variable names, codeframes, missing-value rules, and a simple data dictionary. Then build a repeatable machine learning workflow around it: version the dataset, document transformations, and run the same evaluation steps each time. You’ll move faster because you’re not re-solving the same data engineering problems every study.

Add AI where it supports researchers, not replaces judgment: Use AI integration strategies that keep humans in the loop: AI drafts, you approve. For example, have AI propose segments, drivers, or themes, then require a reviewer to validate them with cross-tabs, raw verbatims, and logic checks before anything reaches stakeholders. This approach protects methodological integrity while still cutting the “blank page” time.

Pilot predictive analytics with one decision your team already makes: Pick a practical AI use case tied to an operational choice, such as forecasting churn risk for a panel, predicting likelihood-to-recommend from experience attributes, or identifying which concepts will clear a purchase-intent threshold. Keep it simple: one outcome variable, a short feature set you can explain, and a holdout test to avoid fooling yourself. The growing focus on data-driven decisions is exactly why this kind of small, decision-led pilot tends to land well internally.

Choose data analysis tools that fit your access and governance reality: If your org is already cloud-forward, prioritize workflows that can run where the data lives. That means less exporting, fewer copies, and cleaner permissioning. Industry reporting has highlighted cloud deployment as the dominant segment, and in practice that often translates to easier scaling and collaboration for analytics teams. Either way, set a minimum bar before you automate anything with respondent data: role-based access, audit trails, and a clear retention policy.
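
To make the "holdout test" from the predictive pilot above concrete, here is a minimal sketch in plain Python. It assumes a single made-up feature (a 1 to 10 satisfaction score) and a binary outcome (recommends or not); the field names, the data, and the simple cutoff rule are illustrative assumptions, not a recommended model.

```python
import random


def split_holdout(rows, holdout_fraction=0.25, seed=42):
    """Shuffle a copy of the rows with a fixed seed, then split off a holdout set."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_holdout = max(1, int(len(shuffled) * holdout_fraction))
    return shuffled[n_holdout:], shuffled[:n_holdout]


def accuracy(rows, feature, outcome, cutoff):
    """Share of rows where 'feature >= cutoff' agrees with the binary outcome."""
    hits = sum((row[feature] >= cutoff) == bool(row[outcome]) for row in rows)
    return hits / len(rows)


def fit_cutoff(train_rows, feature, outcome):
    """Pick the cutoff that maximizes accuracy on the training rows only."""
    candidates = sorted({row[feature] for row in train_rows})
    return max(candidates, key=lambda c: accuracy(train_rows, feature, outcome, c))


# Made-up illustration data: 40 respondents, recommenders score 7 or higher.
respondents = [
    {"satisfaction": score, "recommends": int(score >= 7)}
    for score in range(1, 11)
    for _ in range(4)
]

train, holdout = split_holdout(respondents)
cutoff = fit_cutoff(train, "satisfaction", "recommends")
train_accuracy = accuracy(train, "satisfaction", "recommends", cutoff)
holdout_accuracy = accuracy(holdout, "satisfaction", "recommends", cutoff)
print(f"cutoff={cutoff}, train={train_accuracy:.2f}, holdout={holdout_accuracy:.2f}")
```

The point of the design is that the cutoff is chosen without ever looking at the holdout rows, so the holdout accuracy is the number to report. A large gap between training and holdout accuracy is a sign the model is fitting noise.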

When you start small, standardize inputs, and keep humans accountable for the final call, AI becomes a steady helper instead of a risky experiment. Those same habits also make it much easier to answer the hard questions about privacy, bias, and credibility with confidence.

AI in Market Research: Privacy and Credibility FAQs

If you’re weighing risk before momentum, these are the questions that matter.

Q: What’s the safest way to use AI with respondent data under privacy rules?

A: Start by minimizing exposure: remove direct identifiers, limit fields to what the task truly needs, and keep processing inside approved environments. Treat consent as a living requirement, since consent for data processing must be specific to the purpose and updated if your use changes.
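
As a rough illustration of minimizing exposure, the sketch below keeps only the fields a task needs, always drops direct identifiers, and masks email addresses inside verbatims. The field names, the identifier list, and the email pattern are assumptions for this example; a real pipeline would need a broader set of rules agreed with your privacy team.

```python
import re

# Hypothetical field names treated as direct identifiers in this example.
DIRECT_IDENTIFIERS = {"name", "email", "phone"}

# A deliberately simple email pattern; it will not catch every format.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def minimize_record(record, needed_fields):
    """Keep only the fields the task needs, and never a direct identifier."""
    return {
        key: value
        for key, value in record.items()
        if key in needed_fields and key not in DIRECT_IDENTIFIERS
    }


def redact_emails(text):
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL_PATTERN.sub("[REDACTED_EMAIL]", text)


raw = {
    "respondent_id": "R-0042",
    "name": "Jane Doe",
    "email": "jane.doe@example.com",
    "age_band": "35-44",
    "verbatim": "Great service, reach me at jane.doe@example.com for details",
}

# Even if a caller asks for "email", the identifier rule wins.
cleaned = minimize_record(raw, needed_fields={"respondent_id", "verbatim", "email"})
cleaned["verbatim"] = redact_emails(cleaned["verbatim"])
print(cleaned)
```

Note that a retained respondent ID is still a pseudonymous key, so the cleaned record should continue to be handled inside approved environments.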

Q: How do we prove AI outputs are compliant and auditable?

A: Build a simple audit trail: data source, prompt or model settings, version of the dataset, reviewer name, and what was accepted or edited. Many teams formalize this with checklists and tooling, and the AI governance platforms market is expanding quickly as compliance expectations rise.
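
One possible shape for such an audit trail entry is sketched below, using only the Python standard library. The class name, field names, and the two allowed decision values are assumptions made for illustration; adapt them to whatever your governance process actually records.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone

ALLOWED_DECISIONS = {"accepted", "edited"}  # assumed vocabulary for this sketch


@dataclass(frozen=True)
class AuditEntry:
    """One reviewed AI output: what went in, which settings ran, who signed off."""
    data_source: str
    model_settings: dict
    dataset_version: str
    reviewer: str
    decision: str
    notes: str = ""
    logged_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def __post_init__(self):
        # Reject entries that do not say whether the output was accepted or edited.
        if self.decision not in ALLOWED_DECISIONS:
            raise ValueError(f"decision must be one of {sorted(ALLOWED_DECISIONS)}")


def to_log_line(entry):
    """Serialize an entry as one JSON line, suitable for an append-only log."""
    return json.dumps(asdict(entry), sort_keys=True)


entry = AuditEntry(
    data_source="brand_tracker_wave_12.csv",  # hypothetical file name
    model_settings={"prompt_id": "theme-coding-v3", "temperature": 0.0},
    dataset_version="2024-06-01",
    reviewer="A. Analyst",
    decision="edited",
    notes="Merged two overlapping themes.",
)
print(to_log_line(entry))
```

Writing one JSON object per line keeps the log easy to append to and easy to filter later by reviewer, dataset version, or decision.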

Q: Can AI coding of open-ends still meet methodological integrity standards?

A: Yes, if you treat AI as a first pass and keep humans responsible for the final codeframe. Require spot checks against raw verbatims, monitor agreement over time, and document any rule changes.
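
One common way to monitor agreement between AI codes and human codes is Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The sketch below is a minimal version that assumes each verbatim receives exactly one code from each coder; multi-coded open-ends would need a different approach. The example codes are made up.

```python
from collections import Counter


def cohens_kappa(codes_a, codes_b):
    """Cohen's kappa for two coders who each assign one code per item."""
    if len(codes_a) != len(codes_b) or not codes_a:
        raise ValueError("Both coders must code the same non-empty set of items.")
    n = len(codes_a)
    # Observed agreement: share of items where both coders chose the same code.
    observed = sum(a == b for a, b in zip(codes_a, codes_b)) / n
    # Chance agreement: based on how often each coder uses each code.
    freq_a, freq_b = Counter(codes_a), Counter(codes_b)
    expected = sum(freq_a[code] * freq_b[code] for code in freq_a) / (n * n)
    if expected == 1.0:
        # Both coders used a single identical code throughout.
        return 1.0
    return (observed - expected) / (1 - expected)


# Made-up spot check: AI first pass versus a human reviewer on four verbatims.
ai_codes = ["price", "price", "service", "service"]
human_codes = ["price", "price", "service", "price"]
kappa = cohens_kappa(ai_codes, human_codes)
print(f"kappa = {kappa:.2f}")
```

Tracking this number per wave or per project gives you a simple signal for when the AI first pass is drifting away from the human codeframe and the rules need revisiting.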

Q: When should we avoid using AI in a study?

A: Skip it when the data is highly sensitive, consent is unclear, or the client requires fully manual methods. A practical alternative is using AI only on synthetic or heavily masked training samples.

Q: How do I respond when stakeholders fear “black box” insights?

A: Lead with transparency: show variables used, provide holdout performance, and include plain-language rationale for drivers and segments. Offer a short validation appendix so decision-makers can see how conclusions were checked.

You can move faster with AI while still protecting people, process, and trust.

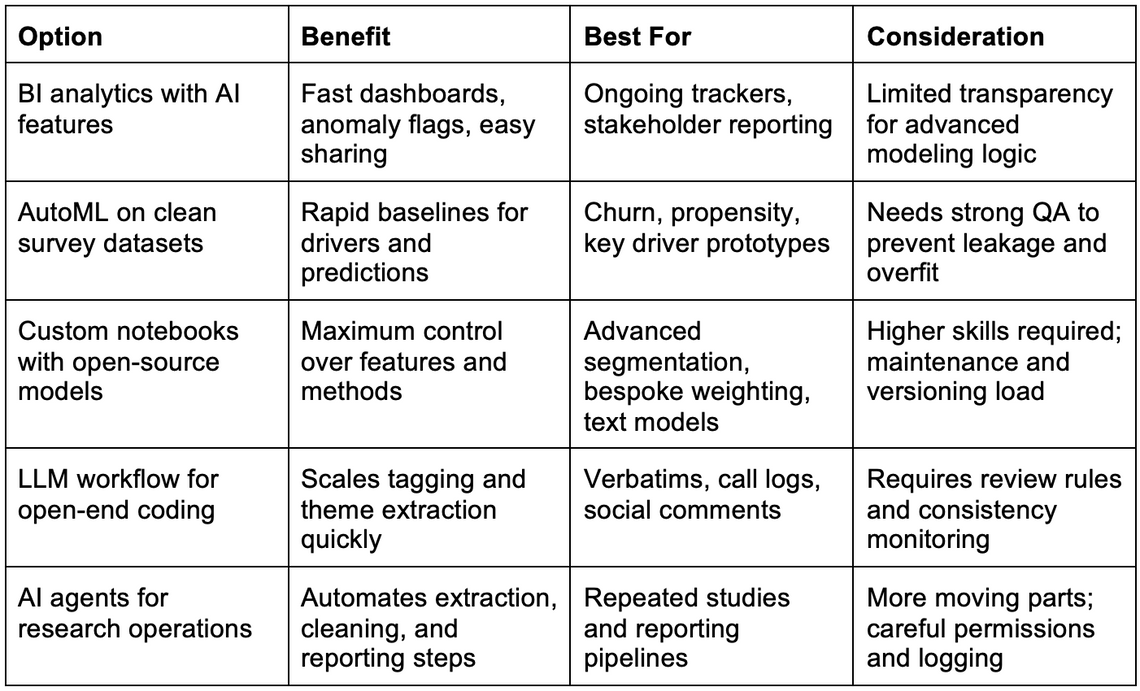

AI Options Compared for Market Research Analysis

Here’s a quick side-by-side view.

This framework compares common AI approaches market research teams use to speed analysis without losing rigor. It matters because the “right” choice is less about hype and more about fit: your data sensitivity, audit needs, budget, and how much your team wants to build versus buy. Since SaaS is often reported to account for about 70% of total company software use, it helps to evaluate both off-the-shelf and custom paths with the same yardstick.

Use the table as a selection checklist: start with how repeatable the work is, then choose the level of control you actually need. When in doubt, pilot two options on the same dataset and compare time saved, error rates, and explainability. Knowing which option fits best makes your next move clear.

Next, we’ll translate these choices into the most realistic adoption wins you can expect.

Take a Small AI Step to Strengthen Research Insights

Market researchers are under pressure to move faster, but nobody wants to gamble on quality, governance, or credibility just to “use AI.” The steady path is the one this guide supports: start with practical, measurable benefits, choose tools that fit your constraints, and treat AI as an assistant, not a replacement for judgment. When that mindset sticks, analysis gets sharper, decisions land with more confidence, and the story you bring to stakeholders supports real business performance. Adopt AI in small, verifiable steps, and your insights get faster without getting flimsy. Pick one repeatable analysis task this week, pilot an AI-assisted workflow, and record what improved, both for the team and for your own professional development. That kind of steady innovation builds a more resilient insights function that keeps delivering even when timelines tighten.