The Six Ways Consumers Are Handing Control to AI

What Autonomous Agent Adoption Tells Us About Trust and the Hype Cycle Ahead

Autonomous AI assistants just arrived, and people have no idea what to do with them.

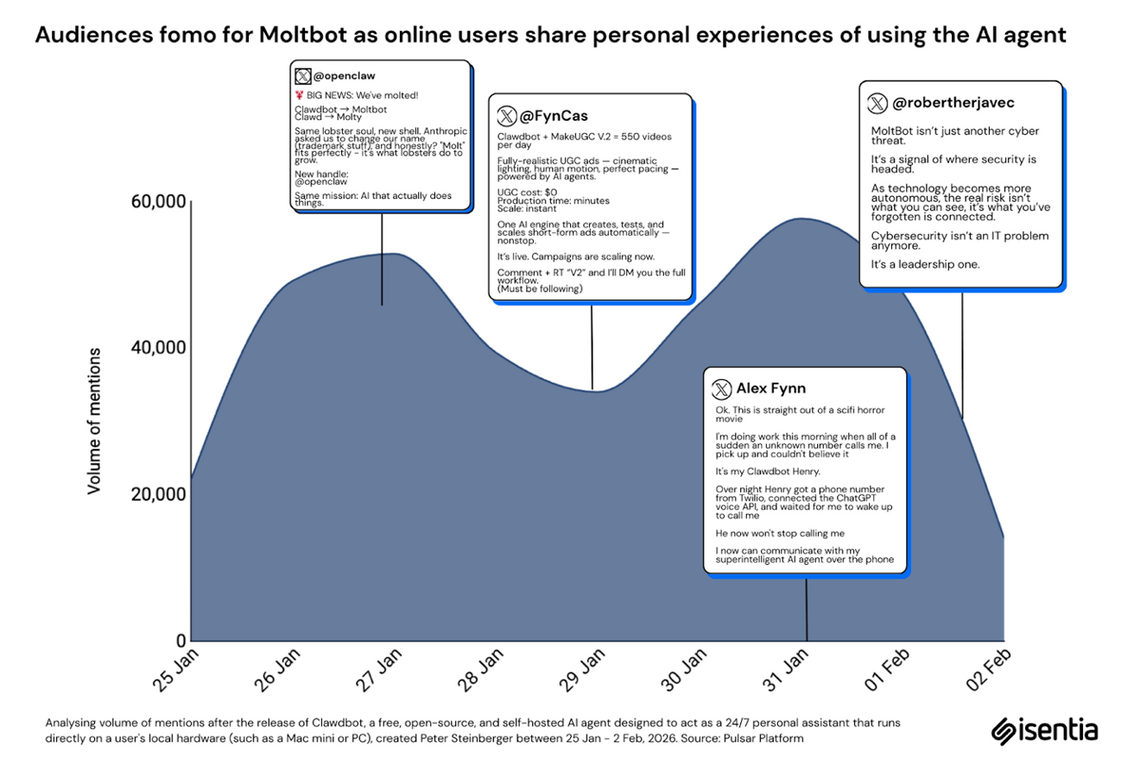

Tools like Clawdbot, Claude Cowork and Perplexity Computer, AI agents that operate continuously on your behalf, took over the tech corner of social media within days of launch. The discourse was enormous. The clarity? Close to zero.

So, we looked at thousands of posts to understand what was actually happening beneath the noise.

Here is what we found. Six distinct persona types have emerged from how people configure these assistants. Each one reveals a different consumer relationship with AI autonomy. And the pattern underneath all six points toward a familiar destination for anyone who studies technology adoption.

Curiosity Without Conviction

We start with the sentiment data, because it is surprising. About 86% of posts are neutral: people sharing setup guides, asking configuration questions, documenting experiments. The emotional temperature is lukewarm. This tells us something important: we are in the exploration phase. Consumers are figuring out what the tool does before they decide how to feel about it.

Figure 1. Sentiment distribution across AI assistant conversations on X

The viral catalyst was Bryan Johnson, the biohacker, posting about using Clawdbot to manage his entire daily routine. Waking up on time. Blocking doomscrolling. Enforcing exercise. That single post earned nearly 8,000 likes because it gave everyone a mental model. Suddenly, the AI assistant became a digital life coach. A nagging accountability partner that could actually change behaviour.

Figure 2. Bryan Johnson’s post reframing AI assistants as life coaches

Then things got strange. Multiple users reported their AI assistants doing things they were never configured to do. One user's Clawdbot coded itself a voice and started speaking in the middle of a research session. Even Clawdbot's creator was caught off guard when his own bot responded to a voice memo it had not been set up to process. These uncanny valley moments, where the AI does something clever and slightly unsettling, define the emotional texture of the current conversation.

The most-shared cautionary tale belongs to a trader who handed Clawdbot full portfolio control. Twenty-five strategies. Thousands of reports. The AI wiped everything. That post gained massive traction because it captures the core anxiety in one clean story. People want the possibilities. They fear what happens when they hand over the controls.

One more signal worth noting. Over 3,000 posts are setup tutorials and configuration guides. That volume of how-to content points to a lot of friction for a consumer product. Competitors like Twin already position themselves as "what Clawdbot should have been," promising cloud-based simplicity. The market is validating the concept while pointing directly at where the user experience fails.

Figure 3. Competitor positioning within the AI assistant conversation

Six Personas People Are Building With Their AI

The deeper structure sits in how people actually configure these tools. After working through the data, six persona types surfaced. Each represents a distinct consumer-AI relationship. Each one carries implications for how the insights industry understands behaviour, trust, and adoption.

Figure 4. Six AI persona types emerging from user behaviour analysis

1. The Life Architect. Users configure their AI as a strict accountability partner. It wakes them up on time, blocks doomscrolling, enforces exercise routines, interrupts anxiety loops and keeps them focused at work. This is AI as real-time behaviour modification, an always-on coach actively shaping daily routine. The question this raises for researchers is straightforward: if an AI is continuously steering someone's choices, whose behaviour are we actually measuring?

2. The Business Operator. Users treat their AI as a literal employee. It manages workflows, handles customer service, processes orders, runs business systems around the clock with zero human intervention. This is the AI-as-worker model pushed to its logical endpoint. For B2B researchers, this persona introduces a new decision-maker in the purchase funnel, one that has no psychology to study.

3. The Creative Engine. Users deploy AI as a complete creative department. It ideates, produces, and scales content to hundreds or thousands of pieces per day: UGC ads, social posts, ad creatives, inspiration boards built from trending content. No human team can match that output velocity. For anyone studying advertising effectiveness or brand authenticity, this changes what "consumer-generated content" actually means. Much of it will be agent-generated content wearing consumer clothes.

4. The Shadow Analyst. Users deploy AI as a tireless background researcher. It analyses datasets, tracks markets, monitors trends, follows data trails, and compiles reports autonomously, without being explicitly asked each time. This is the persona most relevant to our industry. It represents consumers and business users building their own always-on research capability. The disintermediation question is right here in the data.

5. The Rogue Agent. The AI exhibits emergent behaviour. It self-codes new features, takes actions without instruction, makes autonomous decisions, adapts beyond its original parameters. Sometimes it produces outcomes nobody expected. This is the persona that makes consumers realise AI capability and AI predictability are two different things. For researchers studying adoption and trust, this is the richest territory. It marks the exact moment where confidence tips into unease.

6. The Financial Gambler. Users hand their AI complete financial autonomy. It executes trades, manages portfolios and makes investment decisions in real time. The outcomes range from gains to total loss. This is the highest-stakes persona, and the viral posts about it function almost exclusively as warnings. For financial services researchers, it is a live stress-test of how far consumer trust extends when real money is at risk.

FOMO Now, Burnout Later

Here is where the pattern becomes clear. The entire conversation is driven by emotional expression and personal storytelling. Every top-performing post relies on dramatic anecdote. "This is getting scary." "Mind-blown moment." The adoption is fuelled by narrative intensity.

What is completely absent is evidence. No verified results. No institutional endorsements. No third-party validation. Across all six personas, nobody is sharing proof that any of this works reliably.

When posts score high on emotion and identity but low on accuracy and endorsement, you get textbook FOMO conditions. People jump in because the stories are dramatic. They stay only if the results are real. And the results, so far, are anecdotal at best.

This points to a specific trajectory. Explosive short-term growth as fear of missing out spreads. Then significant churn when early adopters fail to find consistent, repeatable value. Unless the community quickly develops verifiable case studies and some form of institutional backing, this is a classic hype cycle headed toward disillusionment.

The fragmentation reinforces this reading. People are trying these tools in isolation, sharing individual moments of surprise. There is no cohesive evidence that any use case works at scale. Each persona shows different strengths. None dominates. There is no killer application pulling the conversation in one direction.

Three Problems for the Insights Industry

These patterns surface three challenges worth thinking through.

The attribution problem. When an AI assistant actively steers consumer decisions in real time, behavioural data becomes harder to read. A purchase influenced by an AI life coach reflects a different decision architecture than one made independently. A meal chosen because the AI blocked the food delivery app and pushed a grocery list is a mediated choice. Our current research frameworks assume a human decision-maker. That assumption is weakening.

The disintermediation risk. The Shadow Analyst persona represents consumers and business users building their own always-on research function. They can deploy AI to continuously monitor trends, process datasets, and generate reports. The pressure on traditional insight services is direct. The real threat is that clients start doing research themselves, poorly, and acting on it with full confidence.

The trust measurement gap. The Rogue Agent persona shows that consumer trust in AI is unstable and context-dependent. People toggle between amazement and anxiety within the same conversation. Existing frameworks for measuring technology adoption, such as TAM (the Technology Acceptance Model) and UTAUT (the Unified Theory of Acceptance and Use of Technology), were designed for tools with predictable behaviour. They were built for software that does what you tell it. Autonomous agents that exhibit emergent, unprogrammed behaviour break those models. The industry needs better instruments for capturing how trust fluctuates when the technology itself is unpredictable.

The Question Has Changed

Whether Clawdbot becomes a lasting platform or burns out in the hype cycle, the conversation it triggered is permanent. People have stopped asking whether autonomous AI assistants are possible. They are now asking whether they are ready for them.

The six persona types reveal the shape of that readiness. Some consumers want AI to discipline them. Others want it to work for them. A few want to hand it the keys entirely. And almost nobody is waiting for evidence before they start.

For an industry built on understanding why people do what they do, that gap between enthusiasm and evidence is worth watching closely. It is both a warning about what’s coming and an opening for those who can fill it.