Reduction of response biases in online surveys

Response biases, which cause reported values to differ from actual values, can be reduced by various measures. However, each measure has its own advantages and disadvantages.

Causes and types of response biases

Participating in an online survey can be demanding. For example, participants may be asked to report memories from the distant past or to spontaneously form an opinion on a topic they have never thought about before.

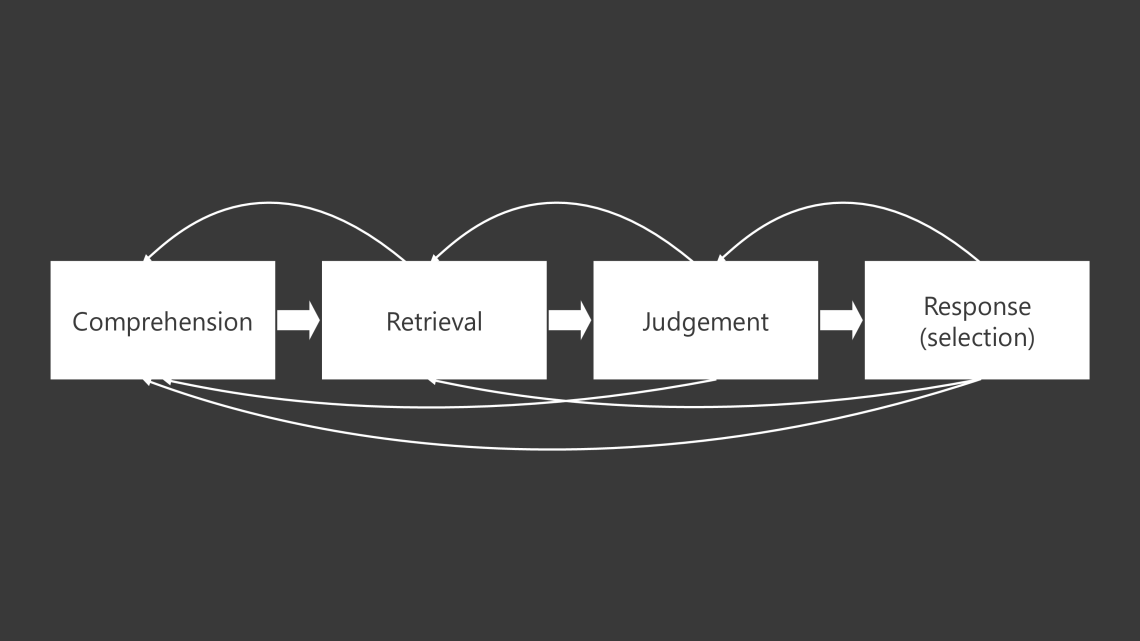

Answering a survey question requires considerable cognitive effort. First, participants must read the question and identify its focus, i.e. what information is sought. They then retrieve relevant information from memory, such as details of past events or a pre-existing opinion on the queried topic. Based on the recalled information, a judgement is formed if required. This is necessary, for example, if two products are to be compared and participants only have separate opinions on each product in mind. Finally, they choose the answer that best reflects the judgement or, in the case of an open question, formulate it in their own words. However, these steps are not always carried out in the described order. It is conceivable, for instance, that participants first read the answer options and only then think about them.

Response process (Source: Groves et al., 2004; Tourangeau et al., 2000)

Given this laborious process, it is likely that some participants are unwilling to carry out all required steps but do not want to stop participating in the survey, for reasons such as incentives or politeness. As a compromise, they perform each step of the process less thoroughly or even omit some steps. Participants who adopt this response strategy:

Settle for the answer option they considered first and which applies to them to some extent

Give answers that do not require much thought and are easy to justify in case of a follow-up question

Arbitrarily select an answer option, which does not require any cognitive effort

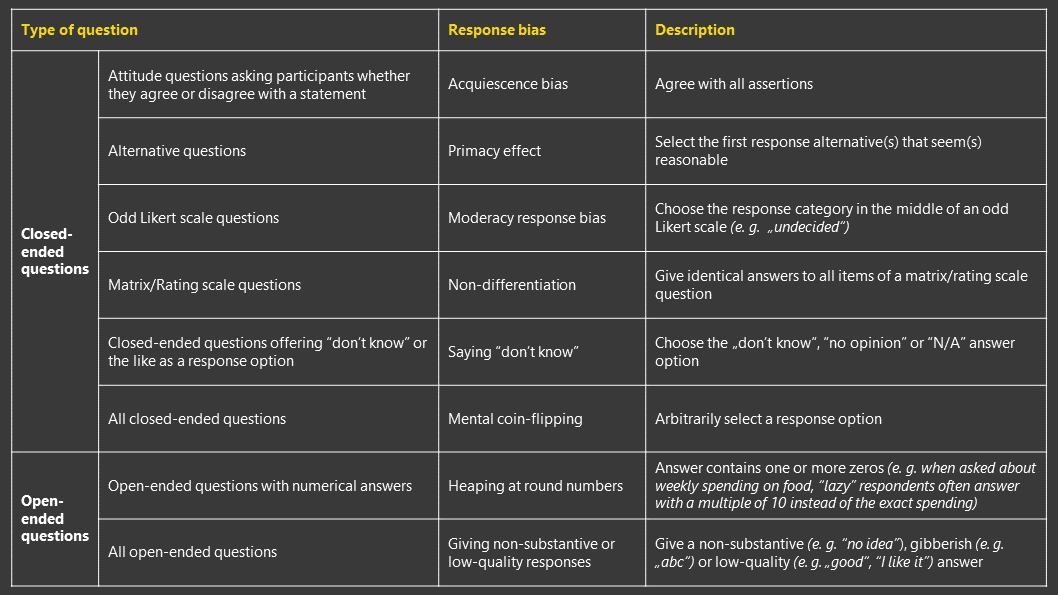

Such behaviours manifest as different response biases, which can be observed for different question types:

Response biases (Source: Bogner & Landrock, 2016; Gideon et al., 2017; Krosnick, 1991; Roßmann, 2017)

These "lazy" online survey participants should thus be distinguished from "dishonest" participants who, driven by social desirability, go out of their way to present themselves positively. However, careless answering distorts the answers just as much, which is highly detrimental to the validity of studies.

Measures to reduce response biases

The likelihood that a participant exhibits response biases depends on several factors, including the complexity of the question, the participant's familiarity with the survey topic, and their motivation. Measures to reduce response biases target these factors.

Reducing the question complexity

The complexity of a question is considered high if its focus, word choice, or sentence structure makes one or more steps of the response process harder, for example:

A question that contains low-frequency words (e.g. "discrepancies" instead of "differences") or has a complex syntax is difficult to comprehend and may be interpreted differently by different participants.

Retrospective questions that use an inappropriate time frame (e.g. the number of everyday products purchased in the last two years) or an ambiguous one (e.g. whether a restaurant was "recently" visited) make it difficult to recall information and make judgements.

Response options that overlap, i.e. are not mutually exclusive, cause difficulties during response selection.

When dealing with complex questions, participants are more likely to show response biases. For this reason, questions should be formulated simply and clearly. This measure has the advantage that market researchers have full control of the question complexity – in contrast to factors on the part of the survey participants. However, depending on the study interests, questions cannot always be simplified without oversimplifying them.
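Overlapping response options are one complexity problem that can be checked mechanically before a survey goes into the field. The following is a minimal sketch, assuming numeric answer brackets given as closed (lower, upper) ranges; the function name `find_overlaps` and the age brackets are hypothetical and not part of any survey tool.

```python
from typing import List, Tuple

Range = Tuple[float, float]


def find_overlaps(options: List[Range]) -> List[Tuple[Range, Range]]:
    """Return all pairs of closed numeric ranges that share at least one value."""
    ordered = sorted(options)  # sort by lower bound
    overlaps = []
    for i, (low_a, high_a) in enumerate(ordered):
        for low_b, high_b in ordered[i + 1:]:
            if low_b > high_a:
                # Ranges are sorted by lower bound, so no later range can overlap.
                break
            overlaps.append(((low_a, high_a), (low_b, high_b)))
    return overlaps


# Hypothetical age brackets: a 30-year-old fits two options at once.
ambiguous = [(18, 30), (30, 45), (45, 60)]
# Mutually exclusive version of the same brackets.
clean = [(18, 29), (30, 44), (45, 59)]

ambiguous_pairs = find_overlaps(ambiguous)  # two overlapping pairs
clean_pairs = find_overlaps(clean)          # empty list
```

A check like this only covers numeric brackets. Overlap between verbal options (e.g. "often" and "regularly") still has to be judged by the questionnaire author.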

Screening of participants who are familiar with the survey topic

Participants who know the survey topic well are likely to have already formed opinions on it prior to the survey. For them, the effort to recall existing memory content is significantly lower than for participants who have little or no experience with the topic and have to assess it spontaneously. As an example, it is certainly easier for users of a product to evaluate it than for someone who knows it only from hearsay. Participants who are familiar with the topic are therefore less likely to show response biases.

Against this background, response biases can be reduced if participants are selected based on their familiarity with the topic. This measure is easy to implement using screening questions but is not always in line with the study interests or the representativeness claim of some studies.

Increasing the participant’s motivation

The more motivated the participants, the less likely response biases are to be observed. For this reason, various measures have been developed to increase their motivation, and these have shown empirical effects against different response biases.

One of these is asking survey participants to promise to provide honest answers, or to confirm afterwards that they have done so. This results in a lower average number of nonsensical answers to “bogus items” (e.g. agreeing with the statement “I have never brushed my teeth”), an indicator that respondents are less inclined to select an answer option arbitrarily. An increase in response consistency can also be observed.

In other studies, participants are warned that careless responding has consequences for them. This measure has the same effects as calls for commitment. In addition, warned participants are less likely to straightline and spend more time on the survey.

Incentivisation also reduces the “mental coin-flipping” response bias and increases the completion time. Memes, i.e. funny photos with motivational captions, by contrast do not help reduce response biases.

The measures described counteract only certain response biases, not all of them. It is also worth noting that warnings or calls for self-commitment can leave a negative impression on participants. They are therefore not long-term solutions and should only be implemented with caution and after weighing up the advantages and disadvantages.

Conclusion

Response biases pose a risk to study validity that must be taken into account. Depending on the research interest and other conditions of the study, market researchers should implement appropriate measures against this source of error. The role of data cleaning in identifying participants who show response biases should also not be underestimated.
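As an illustration of such data cleaning, the sketch below flags respondents based on three indicators mentioned in this article: straightlining, agreement with a bogus item, and a short completion time. It is a minimal sketch under stated assumptions, not a validated procedure: the function name `flag_careless`, the data layout, the 5-point scale, and both thresholds (`agree_from`, `speed_share`) are hypothetical choices made for this example.

```python
from statistics import median


def flag_careless(respondents, agree_from=4, speed_share=0.5):
    """Return {respondent id: list of flags} for respondents worth reviewing.

    Assumed (hypothetical) layout of each respondent dict:
      "grid"    - answers to one grid question on a 5-point scale
      "bogus"   - answer to a bogus item, 5 = fully agree
      "seconds" - total completion time
    """
    # Assumption: "too fast" means below a fixed share of the median time.
    cutoff = speed_share * median([r["seconds"] for r in respondents])
    flagged = {}
    for r in respondents:
        flags = []
        if len(set(r["grid"])) == 1:      # same answer for every grid row
            flags.append("straightlining")
        if r["bogus"] >= agree_from:      # agrees with a nonsensical statement
            flags.append("bogus_item")
        if r["seconds"] < cutoff:         # much faster than the typical respondent
            flags.append("speeding")
        if flags:
            flagged[r["id"]] = flags
    return flagged


# Made-up example data, for illustration only.
respondents = [
    {"id": "a", "grid": [4, 2, 5, 3], "bogus": 1, "seconds": 300},
    {"id": "b", "grid": [3, 3, 3, 3], "bogus": 1, "seconds": 280},
    {"id": "c", "grid": [5, 1, 4, 2], "bogus": 5, "seconds": 90},
    {"id": "d", "grid": [2, 4, 3, 5], "bogus": 2, "seconds": 320},
]

flagged = flag_careless(respondents)
# flagged == {"b": ["straightlining"], "c": ["bogus_item", "speeding"]}
```

A flag marks a case for review rather than proving careless responding: identical grid answers can be sincere, and fast respondents are not necessarily inattentive.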

The article is based on Hieu Nguyen's own research and opinion.

Truong Trung Hieu Nguyen

Research Executive at Ipsos

Hieu is a research executive at Ipsos. Together with his colleagues, he helps companies to make smart decisions by providing high-quality research data quickly and at a competitive price.

Hieu holds a master's degree in Communication Science and Media Research from the University of Hohenheim in Stuttgart, Germany. He specializes in quantitative research and has an interest in methods to ensure and assess data quality. Before joining Ipsos, he worked at Gruppe Nymphenburg in Munich, Germany.

Hieu is looking forward to new contacts in the insights and analytics industry.